We human beings have long manipulated our environments and ourselves. That manipulation includes artificial intelligence.

◊

A sock falls between the washer and dryer, which are too close together for me to reach in with my arm. So, I get one of those grabber things – a reach extender with a pair of little jaws at one end and a grip at the other, joined by a mechanism that opens and closes the jaws. Potential missing sock mystery solved!

How about this one: I can’t remember the word I want; it’s a synonym for “resplendent.” I pull out my phone and look it up. Yes! That’s the word. Now my article will be an even more elegantly and insightfully crafted work of unparalleled literary genius than it was previously. Most excellent! (I can always dream, can’t I?)

In both examples, I’ve done something that feels quotidian but is extraordinary. In daily life, I take for granted that my skin is the boundary between the world and me. What I just described, however, is an extension of myself outside the bounds of my skin.

The grabber example might not seem terribly exciting, given that quite a few other animals – including sea otters, elephants, and crows – use tools. But when we consider the second example, in which I’ve effectively offloaded my thinking to the phone, the prospect of bodily transformation is highlighted in some unique ways.

AI: The Basics

An artificial intelligence is a technology that performs a particular human cognitive function, such as data analysis, as well as or better than a human does. This broad definition includes things like calculators. Consequently, it’s easy to see why we can take seriously the idea that any cognitive activity a machine performs on my behalf thereby extends my mind.

Things get really interesting when the machine goes beyond the coded restrictions of inputs and outputs. The calculator can’t make any inferences on its own about what could happen with 4 after you tap in 2x2=. It won’t propose a pattern like, 4x2=8, 8x2=16, and so forth. The AI we’re talking about, however, does. But how? How does AI do things like create text and images, make predictions and diagnose illnesses?

Right now, artificial intelligences are “narrow” or “weak,” which means they are task-specific, or mono-intelligences. For example, an AI image generator won’t be able to identify traffic patterns and then use those patterns to make a prediction about when traffic lights should change.

Artificial general intelligence (AGI) or “strong” AI would be able to do anything that a human could. At present, such AI doesn’t exist. Instead, it’s the stuff of science fiction, robots that are functionally indistinguishable from humans.

There are three subsets of AI that computer scientists use to create mono-intelligences, the first of which is also used in the latter two: machine learning, neural networks, and deep learning. AI pioneer Arthur Samuel is credited with defining machine learning as “the field of study that gives computers the ability to learn without explicitly being programmed.”

Machine Learning

In machine learning, an algorithm such as a decision tree is applied to large amounts of data. A decision tree model makes predictions or generates classifications by working through a series of conditions on the data. For example, the tree might answer a sequence of “true” or “false” questions to classify a bird as a raven or a crow. Alternatively, the decision tree could be used to make a prediction about someone’s health based on variables like age, weight, and so on.
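To make the raven-or-crow idea concrete, here is a minimal sketch in Python of the smallest possible decision tree: a single true/false question whose cutoff is learned from examples rather than typed in by a programmer. The wingspan figures, the `BIRDS` list, and the function names are all invented for illustration. A real system would use many more measurements and a proper machine learning library.

```python
from typing import List, Tuple

# Hypothetical training data: (wingspan in cm, label). The numbers are made up.
BIRDS: List[Tuple[float, str]] = [
    (85.0, "crow"), (90.0, "crow"), (98.0, "crow"),
    (115.0, "raven"), (120.0, "raven"), (130.0, "raven"),
]

def learn_threshold(examples: List[Tuple[float, str]]) -> float:
    """Try a cutoff halfway between each pair of neighboring wingspans
    and keep the one that misclassifies the fewest training examples."""
    values = sorted(v for v, _ in examples)
    best_threshold, best_errors = values[0], len(examples) + 1
    for low, high in zip(values, values[1:]):
        threshold = (low + high) / 2
        # An example is an error when "wingspan > cutoff" disagrees with "is a raven".
        errors = sum((v > threshold) != (label == "raven") for v, label in examples)
        if errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold

def classify(wingspan_cm: float, threshold: float) -> str:
    """The one-question tree: is the wingspan above the learned cutoff?"""
    return "raven" if wingspan_cm > threshold else "crow"

threshold = learn_threshold(BIRDS)
print(threshold)                   # the cutoff the data suggested
print(classify(125.0, threshold))  # a bird the model has never seen
```

Nobody told the program where to draw the line between the two birds. It found the cutoff by checking which one fit the examples best, which is the sense in which the machine "learns." A full decision tree simply stacks many such questions on top of one another.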

Neural Networks and Deep Learning

Loosely modeled on the human brain’s neural network structure and function, an AI neural network is a type of machine learning model made up of nodes, each of which receives inputs and makes a calculation that yields an output. Because the nodes are interconnected and, in what is called deep learning, arranged in multiple layers, the networks can make complex predictions about things like housing prices, or perform binary classifications, such as deciding whether or not an email message is spam.
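For readers who like to see the moving parts, the following Python sketch shows what a single node does and how a few nodes can be wired into layers. Everything here is an assumption made for illustration: the two yes/no features, the weights, and the function names are hand-picked, not learned from real email. In an actual network, training on many examples would set the weights.

```python
import math
from typing import List

def node(inputs: List[float], weights: List[float], bias: float) -> float:
    """One node: multiply each input by its weight, add them up with a bias,
    then squash the total into the range 0 to 1 with a sigmoid function."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))

def tiny_network(features: List[float]) -> float:
    """Two hidden nodes feed one output node. Weights are invented for illustration."""
    hidden_1 = node(features, [2.0, 1.5], -1.0)
    hidden_2 = node(features, [-1.0, 2.5], -0.5)
    # The output node takes the hidden nodes' outputs as its own inputs.
    return node([hidden_1, hidden_2], [3.0, 3.0], -3.0)

# Hypothetical features: [mentions money (1 or 0), contains a suspicious link (1 or 0)]
suspicious_email = [1.0, 1.0]
ordinary_email = [0.0, 0.0]

for name, email in [("suspicious", suspicious_email), ("ordinary", ordinary_email)]:
    score = tiny_network(email)
    verdict = "spam" if score > 0.5 else "not spam"
    print(f"{name}: score {score:.2f} -> {verdict}")
```

The output of one layer becomes the input of the next. Deep learning amounts to adding more layers like these, with far more nodes in each.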

Remember, however, that these systems are still mono-intelligences, which is to say the scope of their amazing capacity to work quickly and accurately with vast amounts of data is limited to a single task. You and I, on the other hand, can learn across tasks – we can learn, for example, not only how to classify species of bird but also how to predict housing prices and make medical diagnoses. The Holy Grail for AI is, then, AGI, or artificial general intelligence, which is the sort of intelligence we humans have.

The Artificially Intelligent Human

OK, so what does all this have to do with an artificially intelligent human? Well, let’s start by thinking about something we take for granted. Nowadays, for example, it’s common to forget phone numbers. I still remember my home phone number from childhood, but that was long before we could offload that storage to a computer that we hold in our hand. Ask me the number of a friend with whom I speak by phone regularly, and I won’t even be able to tell you the area code, let alone the other digits.

“Where does the mind stop and the rest of the world begin?” That’s the question Andy Clark and David Chalmers ask in their 1998 paper, “The Extended Mind.” Though the extended mind thesis is not original to them, Clark and Chalmers argue that features of the external world are integrated into our cognitive processes.

Before the proliferation of digital tools in our daily lives, we’d write down phone numbers. A pen and paper were the tools for extending our cognition, which brings us back to the grabber example and provides a way for us to orient our thinking about how AI has integrated with humans and will likely do so more extensively in the not-so-distant future.

“We Can Rebuild Him”: Prosthetics

The ancient Egyptians were the first people, so far as we know, to make prosthetic limbs. The “Cairo toe,” which could be as old as 3,000 years, is thought to be the oldest prosthetic. Fast-forward to today. Artificial limbs are made out of materials that are both durable and lightweight, which are a far cry from the wood and leather used for the Cairo toe and countless other prosthetics over the centuries.

More extraordinary still, some researchers have created knee and ankle prosthetics with built-in software to facilitate a natural gait by anticipating the user’s intended movement. Other researchers are working to harness the brain’s electrical signals to an interface in, for example, a prosthetic hand.

This artificial arm was made by the William Robert Grossmith Company, which manufactured artificial limbs in the 1800s. (Source: Wikimedia Commons)

When someone loses a limb, it’s not as if the brain stops sending the electrical signals that run along nerves to control muscle movement – hence the “phantom limb” phenomenon. In one approach to brain-controlled prosthetics, small muscles are grafted to the ends of the severed nerves in the residual limb, and the interface essentially communicates the nerve signal to the prosthesis. The goal is for the think-act sequence to become more intuitive.

Back in the 1970s there was a TV show called The Six Million Dollar Man, in which the main character, Steve Austin, was equipped with artificial body parts that gave him superhuman capabilities. At present, there are no such bionic replacements powered by artificial intelligence, but it’s not hard to see how organic human materials are increasingly replaceable with inorganic and, in some cases, computer technology. And it’s going to cost a lot more than six million dollars.

Exoskeletons

You don’t have to be an invertebrate or fight off murderous creatures, as Ripley does in 1986’s Aliens, in order to don an exoskeleton – a skeleton outside the body. Exoskeletons already exist, with medical, military, and industrial uses. The main function of the wearable device is to augment, extend, or assist natural capacities.

A person with Parkinson’s disease, for example, may suffer from gait freezing, in which the person’s body effectively locks up in mid-stride and which can lead to falls and injury. A soft exoskeleton pushes the hips as the leg swings forward in a walking gait, thereby eliminating the freeze.

An elderly person walking with the aid of an exoskeleton (Source: StockCake, generated by AI)

Artificial intelligence combines with the power of an exoskeleton by way of predictive adaptation and support. For instance, AI can anticipate repetitive motions, thus relieving body parts of the stress and strain caused by such movements.

Replacement Parts

I had a knee replacement, which gave me first-hand experience of one of the ways human body parts are being replaced with inorganic materials. Another example is the pacemaker, which does important work to regulate heart rhythms through electrical impulses. Then there’s the Artificial Pancreas Device System, a multi-device machine that uses algorithms to continually monitor glucose levels and determine insulin doses. You can imagine how these sorts of replacement parts are likely to proliferate as AI technology advances in the medical world.

Neural Interfaces

Perhaps the most striking way in which AI and humans are merging is the brain-computer interface (BCI), one of several types of neural interface. Recall the prosthetic that connects nerve endings to move muscles? Well, that’s a neural interface. Now think about a device implanted in the brain, or electrodes placed on the brain or inside the skull but outside the brain. This is a BCI, which works with the nervous system to directly control devices like computers – no hands or voice required.

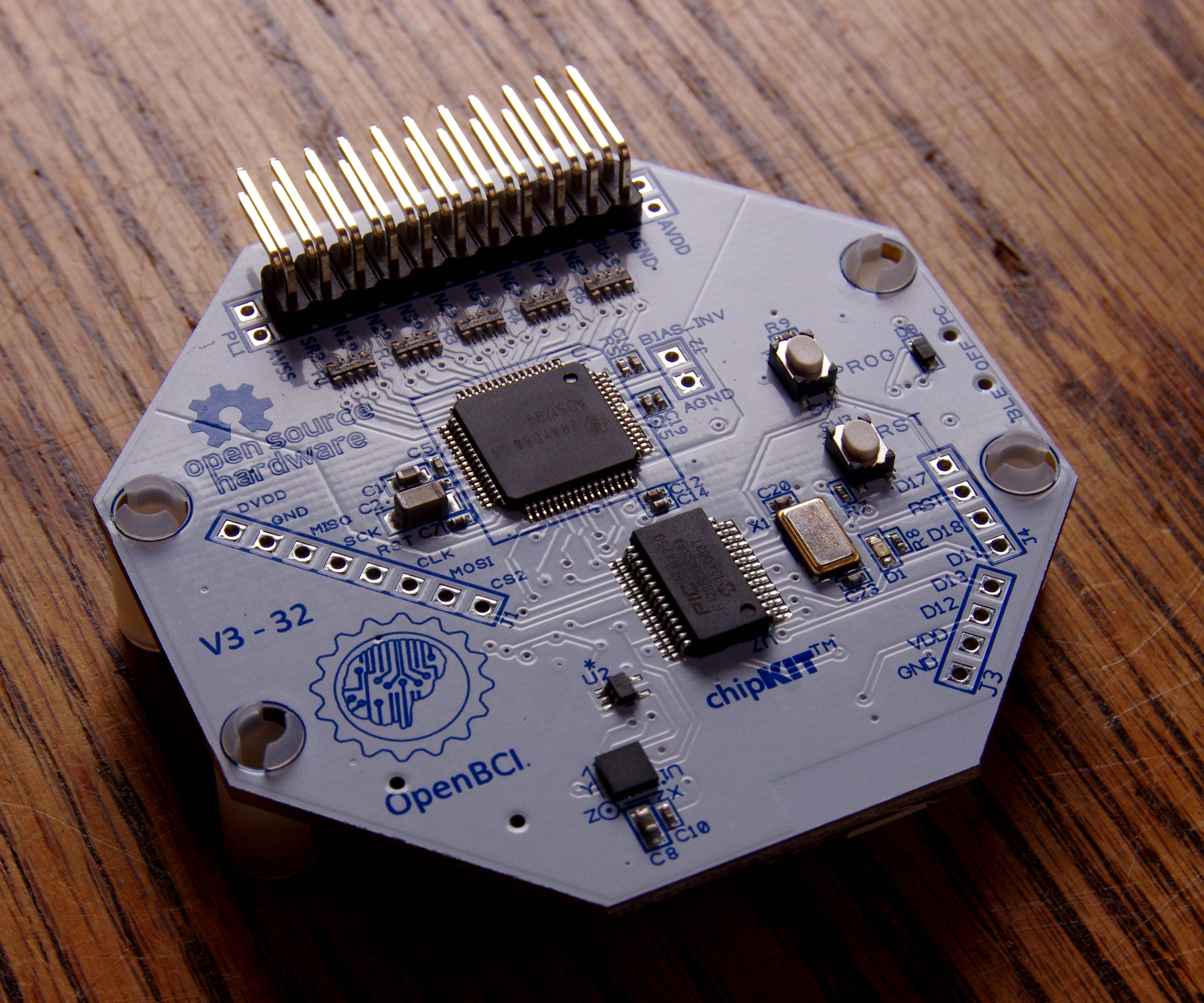

An open-source brain-computer interface (Source: Wikimedia Commons)

Now add artificial intelligence to the mix. As one article puts it, “Artificial intelligence (AI), which can advance the analysis and decoding of neural activity, has turbocharged the field of BCIs.” People disabled by neurodegenerative disease or brain injury can benefit enormously from such devices. In 2023, for example, a clinical trial participant, who had lost the ability to speak, was able to communicate 62 words a minute on a computer “simply by attempting to speak.” That accomplishment is basically just one step away from thought-to-text.

Nanotechnology

Things arguably get more interesting when AI is leveraged in nanotechnology, which works on a teeny tiny scale – as small as individual atoms and molecules. (A nanometer is one billionth of a meter.) Engineers specializing in nanotechnology build structures and devices that can enhance medical imaging. Scientists working in this field can manipulate or transform matter. In medicine, such work includes things like nanofiber wound dressing.

In theory, devices like the nanobot, which is an AI robot, will be able to travel through the body for repair work or drug delivery. AI is also likely to improve the production process of nanomaterials and the quality of the materials themselves.

An artist’s conception of a nanobot (Credit: Alexander Limbach)

The Singularity

According to computer engineer and futurist Ray Kurzweil, human consciousness will merge with AI – and it will be good. Why? Well, given what we know about the brute processing power of artificial intelligence, the human capacity for knowledge and creativity will increase accordingly. Want to speak a new language? Or how about every language there is? No problem.

From Kurzweil’s point of view, the singularity – that hypothetical moment when AI surpasses human intelligence – is not to be feared. There won’t be any science fiction version of AI taking over humanity. Apparently, according to Kurzweil, this merging will occur through nanobots traveling through our brain’s capillaries and digital neurons stored in the cloud – that is, computer data storage.

We probably shouldn’t get caught up in arguing over whether or not predictions like Kurzweil’s will come to pass. After all, we humans have always worked to improve and extend our lives. The rapid development and spread of digital technology highlights that effort. It’s up to us to think carefully now about what we are trying to do with it.

Ω

Mia Wood is a philosophy professor at Pierce College in Woodland Hills, California. She is also a MagellanTV staff writer interested in the intersection of philosophy and everything else. Among her relevant publications are essays in Mr. Robot and Philosophy: Beyond Good and Evil Corp (Open Court, 2017), Westworld and Philosophy: Mind Equals Blown (Open Court, 2018), Dave Chappelle and Philosophy: When Keeping it Wrong Gets Real (Open Court, 2021), and Indiana Jones and Philosophy: Why Did It Have to be Socrates? (Wiley-Blackwell, 2023).

Title image source: Pixabay